In this issue:

I connected 4 MCP servers to my intelligence system. They loaded 114 tool definitions.

I connected Notion to read and write pages. It loaded 22 API operations. I use three.

Anthropic, the company that created MCP, just published a blog post explaining why it bloats your context

Perplexity's CTO says they're moving away from MCP internally

What you should know if you’re building right now

I was mid-workflow when I noticed it.

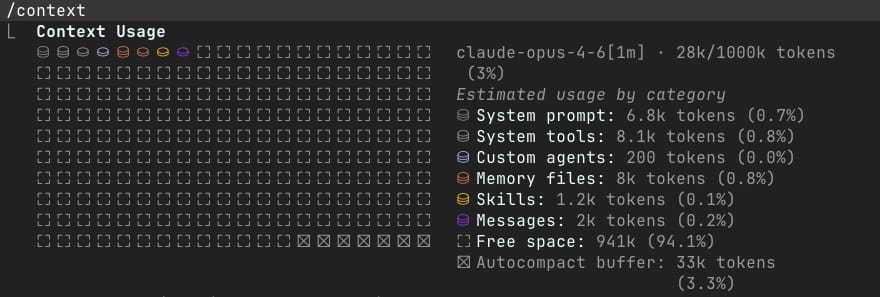

I'd asked Claude to research what's happening in the AI world this week — a routine task, three Perplexity searches running in parallel. The kind of thing my intelligence system does daily. When the results came back, I checked the context usage.

33,000 tokens. Gone. Just from search results.

That's not counting the tool definitions, the system instructions, the memory files, the CLAUDE.md that tells the system who I am and how I work. By the time I'd asked my first real question and run one round of research, nearly half my context window was consumed. Not by the conversation. Not by the thinking. By the plumbing.

The protocol responsible for all that plumbing? MCP — the Model Context Protocol. The thing that makes my entire system work.

And the thing that's slowly eating it alive.

The Protocol That Won Everything

If you're building anything with AI agents in 2026, you've already encountered MCP whether you know it or not.

Anthropic released the Model Context Protocol in November 2024 as an open standard for connecting AI systems to external tools. The pitch: instead of building custom integrations for every API, database, and service, you build one MCP server and any compatible client can use it. A universal adapter for the age of AI agents.

It worked. It worked fast.

By February 2026, MCP had crossed 97 million monthly SDK downloads. Every major AI provider — Anthropic, OpenAI, Google, Microsoft, Amazon — supports it. Anthropic donated the protocol to the Linux Foundation's Agentic AI Foundation, with all five of those companies as founding members. Thousands of community-built MCP servers now connect to everything from Salesforce to Kubernetes to your local file system.

MCP didn't just win. It became the TCP/IP of agent-tool communication — the layer everyone agrees on so that everything above it can be different.

I've been building on it for months. My intelligence system — the thing I use to research, write, build automations, manage clients, and teach — runs on MCP. It's how Claude connects to my n8n workflows, reads and writes to Notion, draws diagrams in Excalidraw, searches the web through Perplexity, manages my calendar, reads my email. MCP turned Claude from a chatbot into something that inhabits my work.

This isn't theoretical for me. I run my business on this protocol.

Which is why what I found when I started measuring matters.

Then the Room Got Crowded

Here's what my system actually looks like, in numbers.

I configured 4 MCP servers in my project — the ones I actually chose: n8n for workflow automation, Notion for my knowledge base, Excalidraw for diagrams, and Perplexity for search. Four connections. The tools I need.

But Claude Code also picks up MCP servers from other sources — claude.ai's native integrations for Gmail, Calendar, Gamma, Granola, plus browser automation through Chrome and Playwright.

The result: 10 MCP servers providing a combined 114 tools.

| MCP Server | Tools |
|---|---|
| Notion | 22 |
| Playwright (browser) | 22 |
| Chrome (browser) | 18 |
| Excalidraw | 13 |
| n8n | 11 |
| Google Calendar | 9 |
| Gmail | 7 |
| Perplexity | 4 |
| Gamma | 4 |
| Granola | 4 |
| Total | 114 |

That's the first thing to understand: MCP servers don't load individual tools. They load their entire surface area. Connect Notion and you don't get "read a page" and "write a page." You get 22 tools, every API operation the server exposes, from retrieving a single block to querying data sources to listing templates. Connect a browser automation server and you get every possible browser interaction: click, hover, drag, fill form, upload file, take screenshot, read console logs, intercept network requests.

Each tool comes with a full definition — name, description, parameter schema. And every one of those definitions loads into the model's context window at the start of every session.
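To make that concrete, here is a rough sketch of what a single tool definition might look like and how its cost could be estimated. The `name`, `description`, and `inputSchema` fields follow the shape MCP uses when a server lists its tools. Everything else is an assumption for illustration: the tool name `notion_retrieve_page`, its wording, and the four-characters-per-token heuristic are made up and are neither the real Notion server's output nor a real tokenizer.

```python
import json

# Hypothetical tool definition, shaped like one entry in an MCP tool listing.
# The name, description, and schema are illustrative, not copied from a real server.
tool_definition = {
    "name": "notion_retrieve_page",
    "description": "Retrieve a Notion page by ID, including its properties.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "page_id": {"type": "string", "description": "ID of the page to retrieve."},
        },
        "required": ["page_id"],
    },
}


def estimate_tokens(obj, chars_per_token=4):
    """Rough estimate: serialized length divided by an assumed chars-per-token ratio."""
    serialized = json.dumps(obj, separators=(",", ":"))
    return max(1, len(serialized) // chars_per_token)


per_tool = estimate_tokens(tool_definition)
print(f"~{per_tool} tokens for one small definition")
print(f"~{per_tool * 22} tokens if all 22 tools were this small")
```

Real definitions tend to be longer than this toy one, with more parameters and longer descriptions, so treat the output as a floor rather than a measurement.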

22 tools from one Notion connection. I connected Notion so Claude could read and write pages. What loaded was 22 tool definitions — I use maybe three of those regularly.

40 browser automation tools. Chrome and Playwright both offer browser control. Both load completely. 40 tool definitions so Claude can click buttons on a webpage.

Those 114 tool definitions — just the descriptions of what each tool does and what parameters it accepts — consume roughly 17,000 to 34,000 tokens depending on how detailed the schemas are. I'm running Claude Opus 4.6 with a 1M token context window, so that's 1.7-3.4% — manageable. But most people aren't on a million-token window. On a standard 200K context, that's 8-17%. Before you've typed a single word.

But tool definitions are just the opening act.

Add my CLAUDE.md (the file that tells Claude who I am, how I work, and what projects I'm running): ~5,200 tokens. My memory file: ~2,100 tokens. System instructions, skill references, git status: another ~7,700 tokens.

Total context consumed before my first message: roughly 32,000 to 49,000 tokens (17,000 to 34,000 for tool definitions, plus about 15,000 for the files above).

On my 1M context window, that's 3.2-4.9%. It sounds fine until you realize what happens next. On a 200K window, which is what most models and plans offer, that's 16-25% gone before you start.

Then I start actually using the tools.

A single Perplexity search returns 10,000 to 11,000 tokens. Not because I asked for that much. Because the MCP server returns full content blocks — titles, URLs, snippets, extracted page text, dates. There's no way to say "just give me the headlines." The protocol doesn't have a concept of granularity. You get everything, every time.

Three parallel searches — which is what I ran at the start of this session — consumed roughly 33,000 tokens of results.

After one round of research, my context looked like this:

| What | Tokens | % of 1M context |
|---|---|---|
| Tool definitions (114 tools) | ~25,000 | 2.5% |
| System files (CLAUDE.md, memory, etc.) | ~15,000 | 1.5% |
| Research results (3 searches) | ~33,000 | 3.3% |
| Conversation so far | ~14,000 | 1.4% |
| Total | ~87,000 | 8.7% |

As the CodeRabbit team put it: "Most of us are now drowning in the context we used to beg for."

Under 9% after one research round. That sounds fine — until you realize this is on a 1M token context window, which I'm running on Opus 4.6. Most people aren't on that. On a standard 200K window, that same 87,000 tokens is 43.5% — nearly half the context consumed after a single round of research. And I hadn't started writing yet.
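The same breakdown can be recomputed for any window size. This is a small sketch using the token figures from the table above. The function name `window_share` is just a label chosen here, and the two window sizes are the ones discussed in this piece.

```python
# Token figures from the table above (all approximate).
session_tokens = {
    "Tool definitions (114 tools)": 25_000,
    "System files (CLAUDE.md, memory, etc.)": 15_000,
    "Research results (3 searches)": 33_000,
    "Conversation so far": 14_000,
}


def window_share(tokens, window):
    """Return tokens as a percentage of the context window, rounded to one decimal."""
    return round(100 * tokens / window, 1)


total = sum(session_tokens.values())
for window in (1_000_000, 200_000):
    print(f"--- {window:,} token window ---")
    for label, tokens in session_tokens.items():
        print(f"{label}: {window_share(tokens, window)}%")
    print(f"Total: {total:,} tokens = {window_share(total, window)}%")
```

Swapping in your own measurements for `session_tokens` gives a quick read on how much of a given window is spent before any real work happens.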

Here's the thing that makes this especially pointed: I chose 4 MCP servers. I ended up with 114 tool definitions. And of those 114, I used maybe five or six in this session. Perplexity search. A few file reads. That's it. The other 100+ tools — Excalidraw's 13 drawing tools, Calendar's 9 event tools, Notion's 22 API operations, both browser automation suites — sat there consuming tokens for capabilities I never touched.

It's like hiring a contractor to install a kitchen faucet and they show up with the entire hardware store. Every wrench, every pipe fitting, every valve type — laid out across your counter, taking up space, before they pick up the one tool they need.

It's Not Just Me

I'd been assuming this was a configuration problem. Maybe I connected too many servers. Maybe I should be more selective. Then I started seeing the same observation from people operating at a very different scale.

At the Ask 2026 conference on March 11, Perplexity's CTO Denis Yarats said something that landed hard: his company is moving away from MCP internally. The reason? Tool descriptions consume 40 to 50 percent of available context windows before agents do any actual work. Not 10%. Not 20%. Half.

Y Combinator CEO Garry Tan went a different direction entirely — he built a CLI instead of using MCP.

And then Anthropic, the company that created MCP, published a blog post that essentially confirmed the problem. "Code Execution with MCP: Building More Efficient AI Agents" identifies two patterns that "increase agent cost and latency":

Tool definitions overload the context window

Intermediate tool results consume additional tokens

Their example: connecting just a handful of MCP servers and loading all tool definitions upfront consumes 150,000 tokens. On a 200K window — which is still what most people work with — that's 75% gone before any work begins. Even on a 1M window, that's 15% consumed by tool descriptions alone.

Cloudflare reached the same conclusion independently. Their team found that agents handle more tools, and more complex tools, when those tools are presented as TypeScript APIs rather than being directly loaded as MCP tool definitions.

The emerging consensus, as one weekly AI roundup put it: "MCP fits dynamic tool discovery well, but production teams are reaching for traditional APIs and CLIs when context efficiency matters."

MCP solved the connection problem. It created a context problem.

The Fix Is Already Here (Sort Of)

The good news: nobody is pretending this isn't happening. And the proposed solutions are already being built.

Anthropic's answer: code execution. Instead of loading all 114 tool definitions into context upfront, the agent discovers tools on demand by browsing a filesystem of available MCP servers. It reads only the tool definitions it needs, writes code to call them, and processes results in a sandboxed execution environment before returning anything to context.

The result in their testing: 98.7% reduction in token usage — from 150,000 tokens to 2,000.

That's not incremental. That's a different architecture.
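A minimal sketch of the on-demand discovery idea, under loud assumptions: the directory layout (one folder per server, one JSON file per tool) and every function name below are hypothetical, chosen only to show the pattern of listing cheaply first and loading a full definition last. It is not Anthropic's implementation.

```python
import json
import tempfile
from pathlib import Path


def build_demo_tree(root: Path) -> None:
    """Write a tiny, made-up tool tree: one JSON definition file per tool."""
    demo = {
        "notion": ["retrieve_page", "update_page", "query_data_source"],
        "perplexity": ["search"],
    }
    for server, tools in demo.items():
        server_dir = root / server
        server_dir.mkdir(parents=True)
        for tool in tools:
            definition = {"name": tool, "description": f"Hypothetical {server} tool: {tool}"}
            (server_dir / f"{tool}.json").write_text(json.dumps(definition))


def list_servers(root: Path) -> list[str]:
    """Cheap discovery step: directory names only, no definitions loaded."""
    return sorted(p.name for p in root.iterdir() if p.is_dir())


def list_tools(root: Path, server: str) -> list[str]:
    """Second step: tool names for one server, still without reading any schema."""
    return sorted(p.stem for p in (root / server).glob("*.json"))


def load_definition(root: Path, server: str, tool: str) -> dict:
    """Only now is a full definition read, and only the one that is needed."""
    return json.loads((root / server / f"{tool}.json").read_text())


with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    build_demo_tree(root)
    servers = list_servers(root)
    tools = list_tools(root, "perplexity")
    loaded = [load_definition(root, "perplexity", "search")]
    print(servers, tools, loaded)
```

The point of the design is that the model's context only ever holds the names it browsed and the one definition it chose, rather than every schema from every server.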

Cloudflare's "Code Mode" takes the same approach. Present MCP servers as code APIs. Let the agent write code to interact with them. Keep intermediate results out of the model's context window. The agent only sees what it explicitly logs or returns.
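Here is a rough illustration of keeping intermediate results out of context. The data, the `fetch_rows` stand-in, and the character-based size estimate are all made up for the sketch. A real setup would run the agent's code in an actual sandbox rather than in a plain function.

```python
def fetch_rows():
    """Stand-in for a tool call that returns a large payload (data is made up)."""
    return [
        {"id": i, "status": "open" if i % 10 == 0 else "closed", "notes": "x" * 200}
        for i in range(1_000)
    ]


def agent_script():
    """Code the agent might write: process everything locally, return only a summary."""
    rows = fetch_rows()  # the large intermediate result stays inside this scope
    open_ids = [r["id"] for r in rows if r["status"] == "open"]
    return {
        "total_rows": len(rows),
        "open_count": len(open_ids),
        "first_open_ids": open_ids[:5],
    }


def rough_tokens(obj, chars_per_token=4):
    """Very rough size estimate based on string length; not a real tokenizer."""
    return len(str(obj)) // chars_per_token


summary = agent_script()
print("returned to model:", summary)
print("approx tokens if raw rows were returned:", rough_tokens(fetch_rows()))
print("approx tokens for the summary:", rough_tokens(summary))
```

Only the value returned by `agent_script` would reach the model. The thousand rows it filtered never would.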

MCP v2 beta, which dropped March 13, starts addressing some of the protocol-level gaps. The biggest addition: resources as first-class protocol entities, separate from tools. A database schema, a user's calendar, a product catalog — these are now distinct from tool definitions. This separation reduces prompt bloat by letting agents understand what data is available without loading every tool that could act on it.

The v2 beta also introduces elicitation — a standardized way for agents to pause, ask questions, and collect missing context before proceeding. Instead of hallucinating a value or returning an error, the agent can "ask back" through a structured schema. It's a small change that signals a bigger shift: MCP is evolving from a request/response protocol into something closer to a dialogue protocol.

And then there's the 2026 roadmap. Published by the MCP core maintainers, it's ambitious. Four priority areas: scaling transport for horizontal deployments, closing lifecycle gaps in the Tasks primitive, building enterprise features (audit trails, SSO), and the transformative bet: agent-to-agent communication.

The vision: MCP servers stop being passive tool providers. They become autonomous agents themselves. A research agent delegates to a search agent, a summarization agent, a citation agent. Each manages its own context. Each reports back through structured task lifecycles. The central model coordinates but doesn't micromanage — and critically, doesn't hold every intermediate result in its own context window.

This is where the protocol needs to go. The question is how long the gap lasts between where it is and where it's headed.

What This Means If You're Building

I've seen this pattern before.

A decade ago, I was at Microsoft migrating Fortune 100 companies to Office 365. The protocol, or in that case the platform, had won. Exchange Online was the future. Every CTO agreed. The migration path was clear.

What nobody told them was that moving to the cloud would surface every architectural shortcut they'd made over the previous twenty years. Mismatched permissions, orphaned mailboxes, undocumented relay rules, third-party integrations that nobody understood but everyone depended on. The migration worked. And then the real work started — learning to operate, optimize, and govern the thing you'd just adopted.

MCP is in that phase right now. The protocol won. The migration happened. Everyone is connected. And the operational reality is catching up to the adoption curve.

Developer Jamie Duncan nailed the analogy: "Treating context windows as infinite resources creates unsustainable systems, just as infinite dependency installation historically created bloated software."

Here's what I'm doing about it in my own system — not hypothetical advice, but actual changes I'm making:

Think in context budgets. Every MCP server you connect has a cost. Not in dollars — in tokens. Notion's 22 tools cost me roughly 3,000+ tokens of context. If I'm not using Notion in a session, that's 3,000 tokens of thinking capacity I've traded for a capability that sits idle. Multiply that across 10 servers and the tax adds up fast. I'm starting to think about tool connections the way I think about subscription costs: what's the ROI per session?
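A back-of-the-envelope version of that budget, as a sketch. The tool counts come from the table earlier in this piece. The flat per-tool cost is an assumption: it takes the low end of the 17,000 to 34,000 token estimate and spreads it evenly over 114 tools, which real servers will not match exactly.

```python
# Tool counts from the table earlier in this piece.
TOOLS_PER_SERVER = {
    "Notion": 22, "Playwright": 22, "Chrome": 18, "Excalidraw": 13, "n8n": 11,
    "Google Calendar": 9, "Gmail": 7, "Perplexity": 4, "Gamma": 4, "Granola": 4,
}

# Assumption: a flat per-tool cost, using the low end of the estimate quoted above.
# Real definitions vary in size from tool to tool.
TOKENS_PER_TOOL = 17_000 / sum(TOOLS_PER_SERVER.values())


def idle_cost(used_servers):
    """Estimated tokens spent on definitions for servers not touched this session."""
    return {
        server: round(count * TOKENS_PER_TOOL)
        for server, count in TOOLS_PER_SERVER.items()
        if server not in used_servers
    }


idle = idle_cost(used_servers={"Perplexity"})
for server, tokens in sorted(idle.items(), key=lambda kv: -kv[1]):
    print(f"{server}: ~{tokens:,} idle tokens")
print(f"Total idle: ~{sum(idle.values()):,} tokens")
```

Under these assumptions Notion comes out a little over 3,000 tokens, in line with the figure above. The more useful number is the total for servers a session never touches.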

Audit your auto-loaded servers. I configured 4 MCP servers. I ended up with 10. The extras came from platform integrations I didn't explicitly choose. Two browser automation suites doing the same job. Services I rarely use loading their full tool surface on every session. This is how bloat happens in any system. You don't plan for it. You accumulate it.

Watch what comes back. The Perplexity MCP is incredibly useful. It's also incredibly verbose. One search returns up to 11,000 tokens because it has no concept of "light" versus "detailed" results. Until MCP servers offer response granularity, I need to be selective about when I invoke tools that return large payloads, or move research-heavy workflows into sub-agents that don't share the main context window.
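One workaround is to trim results in a wrapper or sub-agent before they reach the main context. The sketch below assumes a result shape with `title`, `url`, `snippet`, `page_text`, and `date` fields, based on the fields described earlier. Those field names and the `compact_results` helper are hypothetical, not the Perplexity server's actual response schema.

```python
def compact_results(results, max_items=5, snippet_chars=0):
    """Keep only title and URL (plus an optional short snippet) from each search result."""
    compact = []
    for item in results[:max_items]:
        entry = {"title": item.get("title", ""), "url": item.get("url", "")}
        if snippet_chars > 0:
            entry["snippet"] = item.get("snippet", "")[:snippet_chars]
        compact.append(entry)
    return compact


# Made-up payload with the assumed fields: title, URL, snippet, page text, date.
raw = [
    {
        "title": f"Result {i}",
        "url": f"https://example.com/{i}",
        "snippet": "short summary " * 10,
        "page_text": "full extracted page text " * 400,
        "date": "2026-03-01",
    }
    for i in range(8)
]

light = compact_results(raw)
print(len(str(raw)), "characters before,", len(str(light)), "characters after")
```

The trade-off is that the main agent loses the page text, so this fits a first pass that picks which sources are worth reading in full.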

Know when MCP is the wrong tool. Not everything needs a protocol. A recent benchmark by Scalekit found MCP costs 4 to 32x more tokens than a CLI for identical operations. If your agent is calling a well-known tool with a stable interface, a direct API call or CLI command might be the better choice. MCP shines when you need dynamic discovery, when the agent doesn't know in advance what tools are available. For everything else, the simpler path is usually the cheaper one.

Prepare for the architecture shift. Anthropic's code execution approach and Cloudflare's Code Mode aren't optional upgrades. They're the direction. The current pattern — connect 4 servers, inherit 114 tools, hope for the best — is temporary. The future is on-demand tool discovery, sandboxed execution, and agents that manage their own context. Building with that trajectory in mind means the transition will be smoother when it arrives.

Open Questions

What's the right number of MCP tools per session? My system loads 114. I use 5-6. There's a sweet spot somewhere — enough tools to be useful, few enough that context isn't dominated by capability descriptions. I don't know where that line is yet.

Should MCP servers support response granularity? A "compact" versus "detailed" response mode would change the economics dramatically. A Perplexity search that returns titles and URLs at 500 tokens versus full content at 11,000 tokens is a 22x difference. The protocol doesn't support this today.

How do we measure context efficiency? We have benchmarks for model capability. We don't have benchmarks for how efficiently an agent uses its context window. A system that uses 45% of context on overhead before starting work is fundamentally different from one that uses 5%. That difference should be measurable, visible, and optimizable.

When code execution becomes the norm, what happens to the ecosystem? Thousands of MCP servers were built for the current architecture — load definitions, call tools directly. The shift to on-demand discovery and code-based invocation means many of those servers will need to be rebuilt or wrapped. That's a migration. Migrations are never as smooth as the blog posts suggest.

This dispatch was written using the system described in it: Claude Code with 10 MCP servers connected, context budget included. The Perplexity searches that informed the industry sections consumed approximately 33,000 tokens. You're reading the output of a system that was, at this point, operating on about half its available context.

That’s it, folks

Thanks for reading through.

I’d love to know how you felt about today’s newsletter. This will help me make the newsletter better.